1.4 📐 Mathematical Fundamentals Behind LLMs: Linear Algebra, Probability & Optimization

💡 AI is powered by math. But don’t worry—we’ll make it painless. LLMs like GPT-4, Claude, and Gemini may seem like magic, but at their core, they’re just gigantic mathematical engines.

But how does math make AI work?

In this guide, Obito & Rin will break it down:

✅ Linear Algebra → How AI represents and manipulates words as numbers

✅ Probability → How LLMs predict the next word with confidence

✅ Optimization → How models learn and improve over time

Let’s jump in!

🔢 Linear Algebra: How LLMs See the World

👩💻 Rin: "Obito, I get that AI uses numbers, but how do words become math?"

👨💻 Obito: "Everything in an LLM is represented as vectors and matrices—thanks to linear algebra."

👩💻 Rin: "You lost me at matrices."

👨💻 Obito: "Think of a vector as a list of numbers that represent a word’s meaning. The AI doesn’t see words—it sees mathematical relationships between them."

📌 Example: Word Embeddings (Vector Representations)

"King" → [0.23, -1.02, 0.78, ...]

"Queen" → [0.21, -0.95, 0.80, ...]

"Apple" → [1.15, 0.24, -0.43, ...]

👩💻 Rin: "Wait, so similar words have similar vectors?"

👨💻 Obito: "Exactly! Words used in similar contexts end up close together in vector space, which is how LLMs capture synonyms and relationships."
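To make "similar vectors" concrete, here is a minimal sketch in plain Python. It assumes we keep only the first three numbers of each example embedding above (real embeddings have hundreds or thousands of dimensions) and compares them with cosine similarity, which is one common way to measure closeness, not the only one. The name `cosine_similarity` is our own choice for illustration.

```python
import math

# Toy embeddings: only the first three components shown in the example above.
# Real embeddings are much longer; these values are for illustration only.
embeddings = {
    "King": [0.23, -1.02, 0.78],
    "Queen": [0.21, -0.95, 0.80],
    "Apple": [1.15, 0.24, -0.43],
}

def cosine_similarity(a, b):
    """Return the cosine of the angle between vectors a and b (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

king_queen = cosine_similarity(embeddings["King"], embeddings["Queen"])
king_apple = cosine_similarity(embeddings["King"], embeddings["Apple"])

print(f"King vs Queen: {king_queen:.3f}")  # close to 1: nearly the same direction
print(f"King vs Apple: {king_apple:.3f}")  # negative: pointing a different way
```

With these toy numbers, "King" and "Queen" score close to 1 while "King" and "Apple" score below 0, which is the "similar words, similar vectors" idea in miniature.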

📊 Matrix Operations: The Core of AI Computation

👩💻 Rin: "Okay, but how does AI process these vectors?"

👨💻 Obito: "By using matrices—big grids of numbers that store and transform data."

📌 Example: A Simple Matrix Multiplication in an LLM

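[[1, 0, 2], [3, 1, 4]] × [[0.5, 1], [1, 2], [1, 1]] = [[2.5, 3], [6.5, 9]]

Here a 2×3 matrix times a 3×2 matrix gives a 2×2 result. Each output entry is one row of the first matrix multiplied element by element with one column of the second, then summed: 1·0.5 + 0·1 + 2·1 = 2.5.

👩💻 Rin: "So the AI does millions of these calculations per second?"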

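👨💻 Obito: "Far more! Modern GPUs perform trillions of these multiply-and-add steps per second. Most of the work in each neural network layer is matrix multiplication, followed by a simple nonlinear function."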
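The multiplication example can be checked with a few lines of plain Python. This is a sketch for illustration, not how real frameworks do it (they hand the work to optimized GPU libraries). The example multiplies a 2×3 matrix by a second matrix, so that second matrix needs three rows for the shapes to line up; the third row `[1, 1]` used here is inferred from the stated result. The helper name `matmul` is our own.

```python
def matmul(a, b):
    """Multiply matrix a (n x k) by matrix b (k x m), both given as lists of rows."""
    if len(a[0]) != len(b):
        raise ValueError("inner dimensions must match")
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]

A = [[1, 0, 2],
     [3, 1, 4]]      # 2 x 3
B = [[0.5, 1.0],
     [1.0, 2.0],
     [1.0, 1.0]]     # 3 x 2 (third row inferred from the stated result)

result = matmul(A, B)
print(result)  # [[2.5, 3.0], [6.5, 9.0]]
```

Each output number is a row of `A` paired with a column of `B`, multiplied element by element and summed. A real model repeats this with matrices that have thousands of rows and columns.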

🎲 Probability: How LLMs Predict the Next Word

👩💻 Rin: "Okay, but how does an LLM decide what word to generate next?"

👨💻 Obito: "That’s where probability and statistics come in. The model assigns a probability to every possible next token (a word or piece of a word), then either picks the most likely one or samples from those probabilities."

📌 Example: Predicting the Next Word

"The cat sat on the..."

→ "mat" (85% probability) ✅

→ "floor" (10% probability)

→ "dog" (5% probability)

👩💻 Rin: "So AI doesn’t just pick a word? It calculates which one makes the most sense?"

👨💻 Obito: "Exactly! And the temperature setting controls how adventurous that choice is: a low temperature makes responses more deterministic, while a high temperature makes them more varied and creative."
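Here is a rough sketch of how scores become probabilities and how temperature reshapes them. It uses the softmax function, the standard way to turn raw model scores (logits) into probabilities. The logits below are an assumption: they are chosen so that temperature 1.0 reproduces the 85% / 10% / 5% split from the example, since a real model's scores are not given.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; temperature rescales the scores first."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

words = ["mat", "floor", "dog"]
# Assumption: logits chosen so temperature 1.0 reproduces 85% / 10% / 5%.
logits = [math.log(0.85), math.log(0.10), math.log(0.05)]

for t in (0.5, 1.0, 2.0):
    probs = softmax(logits, temperature=t)
    print(t, {w: round(p, 3) for w, p in zip(words, probs)})

# Greedy decoding: always take the most likely word.
probs = softmax(logits)
greedy = words[probs.index(max(probs))]

# Sampling: draw a word in proportion to its probability (seeded for repeatability).
rng = random.Random(0)
sampled = rng.choices(words, weights=probs, k=1)[0]
print(greedy, sampled)
```

Lowering the temperature pushes even more probability onto "mat", while raising it spreads probability toward "floor" and "dog". That is why low temperatures feel deterministic and high ones feel more creative.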

📉 Optimization: How LLMs Learn & Improve

👩💻 Rin: "Alright, but how do these models learn? They start off dumb, right?"

👨💻 Obito: "Yep! Before training, an LLM’s weights are just a random mess of numbers. It learns using optimization techniques like gradient descent."

👩💻 Rin: "Sounds fancy. What’s gradient descent?"

👨💻 Obito: "It’s how AI adjusts its weights to make better predictions, like a GPS finding the fastest route. Each step nudges the weights in the direction that reduces the error the most."

📌 How Gradient Descent Works:

1️⃣ The model makes a prediction

2️⃣ It calculates how wrong it was (loss function)

3️⃣ It adjusts its weights to reduce the error

4️⃣ Repeat millions of times until the model gets good

👩💻 Rin: "So AI is just fine-tuning millions of numbers until it gets things right?"

👨💻 Obito: "Bingo!"
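The four steps above can be sketched on a deliberately tiny problem: a "model" with a single weight `w` that must learn the rule y = 2 × x. Everything here (the data, the learning rate, the 50 steps) is a made-up toy setup for illustration. Real LLMs apply the same loop to billions of weights and use more elaborate optimizers.

```python
# Toy data generated by the rule y = 2 * x; the model must discover the 2.
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]

def loss(w):
    """Mean squared error of the prediction w * x against the targets."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def gradient(w):
    """Derivative of the loss with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)

w = 0.0                 # start from an uninformed guess
learning_rate = 0.05    # step size (hypothetical value chosen for this toy problem)
history = []

for step in range(50):
    history.append(loss(w))            # steps 1-2: predict and measure the error
    w -= learning_rate * gradient(w)   # step 3: move w against the gradient

print(f"learned w = {w:.4f}")
print(f"loss went from {history[0]:.4f} to {history[-1]:.6f}")
```

The weight starts at 0 and ends up very close to 2 as the loss shrinks toward zero. The learning rate matters: too small and learning crawls, too large and the updates overshoot and never settle.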

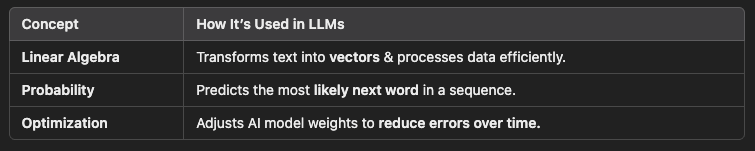

🏆 Bringing It All Together: How Math Powers LLMs

👩💻 Rin: "Okay, let’s connect the dots. How do these math concepts power LLMs?"

👨💻 Obito: "It all comes together like this:"

👩💻 Rin: "So AI is just a giant math engine crunching numbers at scale?"

👨💻 Obito: "Exactly! LLMs are built on pure math and computation."

🎯 Final Takeaways: Math Is the Backbone of AI

✅ Linear Algebra powers how AI stores and processes words as numbers.

✅ Probability helps LLMs predict the most likely next word.

✅ Optimization (Gradient Descent) fine-tunes AI to improve accuracy over time.

👩💻 Rin: "Wow, so AI is all about numbers, probabilities, and continuous learning?"

👨💻 Obito: "Yep! No magic—just a LOT of math."

👩💻 Rin: "This changes how I see AI. It’s not thinking—it’s just crunching numbers!"

👨💻 Obito: "Exactly! And in Part 5, we’ll break down how Transformers use these concepts to process language."

🔗 What’s Next in the Series?

📌 Next: The Transformer Architecture—How LLMs Process Language

📌 Previous: Understanding Neural Networks: The Foundation of LLMs

🚀 Want More AI Deep Dives?

🚀 Follow BinaryBanter on Substack, Medium | 💻 Learn. Discuss. Banter.